Part 2

Read Part 1: Propaganda war: Weaponizing the internet

MANILA, Philippines – 34-year-old Mocha Uson is a singer-dancer who grew her Facebook page with sex advice and sessions in the bedroom with her all-girl band, the Mocha Girls.

Their trademark gyrations and near-explicit sexual moves onstage have titillated Filipinos since 2006, and have caused controversy from sex to politics – from a controversial kiss in 2008 after Katy Perry’s hit single was released; to a campaign for the Reproductive Health Bill for sex education and access to contraceptives; to a defense of twerking at political rallies last year.

For the 2016 Philippine presidential elections, Uson campaigned hard for her candidate, then Davao City Mayor Rodrigo Duterte, onstage and on her Facebook page, which became one of his campaign’s most effective online political advocacy tools. (Most of the sex-themed videos have been deleted from Facebook, but they remain on YouTube.)

Soon after Duterte won, Uson completed her pivot from sexy entertainer to political blogger with an interview with the President-elect.

She hit the headlines again in August when news leaked that she was going to be a social media consultant at the Bureau of Customs. That didn’t happen largely because of a public outcry that ridiculed her lack of knowledge.

Uson came back and dared her critics to volunteer for government, floated the first of many conspiracy theories, and stepped up her own attacks against anyone who challenged – or just questioned – President Duterte.

When Duterte boycotted media for two months, traditional journalists became one of her favorite targets – individually and organizationally.

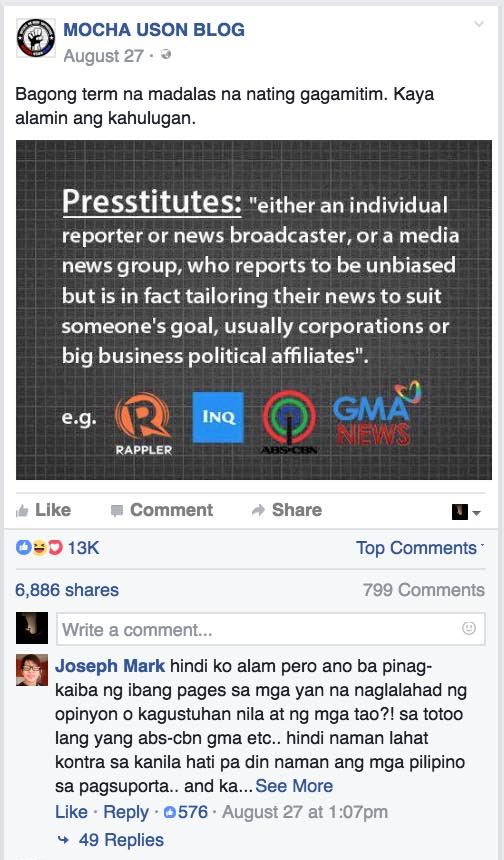

Meet the ‘Presstitutes’

One of her often shared memes is “Presstitutes” – a wordplay of press + prostitute, alleging corruption against the Philippines’ top news groups, ABS-CBN, GMA-7, the Philippine Daily Inquirer, and Rappler.

Other favorite targets are Senator Leila de Lima and Senator Antonio Trillanes IV.

In every attack, Uson provides no evidence for her ad hominem accusations, but they have been shared so often that many believe they are true.

“What started out as a lie or half-truth becomes the truth by the time it reaches the general public,” Vincent Lazatin, Executive Director of the Transparency & Accountability Network, said in a recent panel on Technology and the Public Debate at Rappler’s #HackSociety.

Uson declined our request for an interview.

In a September 2016 post, she boasted that her Facebook page, which has more than 4 million followers, has surpassed the engagement metrics of top media groups in the Philippines.

Her Facebook page is the lynchpin of a sophisticated pro-Duterte propaganda machine: in one post, she gives instructions for supporters to follow other Duterte political advocacy pages and supporters as well as anonymous blogs. That simple action amplifies the network effect and makes their entire collective more powerful.

Part 1 of our series, Propaganda war: Weaponizing the internet, focused on the bots – or automated programs – that attacked users based on key words, as well as networks of paid trolls and fake accounts that proliferated in the past year.

“You spoke about how artificial intelligence is here now,” Lazatin said, “but what we’re seeing on the internet is a different kind of AI, which is Artificial Ignorance. The bots, the algorithms and the paid trolls, they are purveyors of Artificial Ignorance.”

Deepening the network effect

This piece looks at how these paid initiatives interact with real people and the impact of Facebook’s algorithms on our democracies.

To deepen the network effect, these bots, fake accounts, and anonymous pages anchor on real people like Uson, whose page helps amplify those accounts’ reach.

Uson’s page, in return, becomes more powerful because of the increased engagement and invisible web of actions that connect her advocacy page to the others, taking advantage of the actions rewarded by Facebook’s algorithms.

That means their collective messages reach – and convince – more people, an effective way to create a social movement.

Algorithms: Gatekeeper that can’t tell fact from fiction

Facebook’s algorithms, created in a black box, are extremely powerful in shaping reality and creating echo chambers that could be harmful to democracy.

They cater to our weaknesses, what psychologists call cognitive bias – when we unconsciously gravitate towards those who echo what we believe.

“You’re not seeing everything,” Stephanie Sy, founder of Thinking Machines Data Science, said on the September 26 #HackSociety panel on Technology and the Public Debate. “You’re seeing what Facebook thinks you’re most likely to interact with, and if you interact with something, you’ll see more and more and more of that. And that naturally pushes people into social bubbles that don’t talk to each other and engage with each other.”

Observers say the echo chamber effect became more pronounced after Instant Articles began last year, putting more news articles alongside updates from your family and friends.

With 1.7 billion monthly active users worldwide, Facebook is effectively the world’s largest news source, the umbrella organization through which nearly all news content flows.

Its algorithms decide what you see on your feed.

“In many ways, the algorithm has become an editor,” Dr. Jeffrey Herbst, President and CEO of the Newseum in Washington, D.C., told a September East-West Center annual gathering of international journalists in New Delhi. That is a loaded phrase for traditional journalists because the editor exercises the gatekeeping function that once gave news groups their power to shape the national narrative.

Given that Facebook-owned platforms, including Instagram, Messenger and WhatsApp, reach 86% of internet users aged 16 to 64 in 33 countries and that 44% of people across 26 countries say they use Facebook for news, that algorithm determines reality.

This is more significant, said Herbst, than the emergence of news websites.

One potentially fatal flaw for democracies? The algorithms don’t distinguish fact from fiction.

This partly explains why Uson’s posts can compete with – and often beat – those of news groups. All it takes is for a committed group to organize click farms and trolls, paid or not. Or to be outlandishly controversial, which is quite easy if truth doesn’t matter.

Emotional call-to-action posts in a heated political environment get a lot of engagement, the key metric for social media.

“Facebook’s goal as a company that seeks to make money for shareholders is to make sure that people stay on its platform as long as possible and read as many posts as possible so that they can sell as many ads as possible at a higher price,” Herbst reminded journalists.

US elections

It’s not just happening in the Philippines. During one of America’s most heated election campaigns, Facebook is where most political discourse is happening.

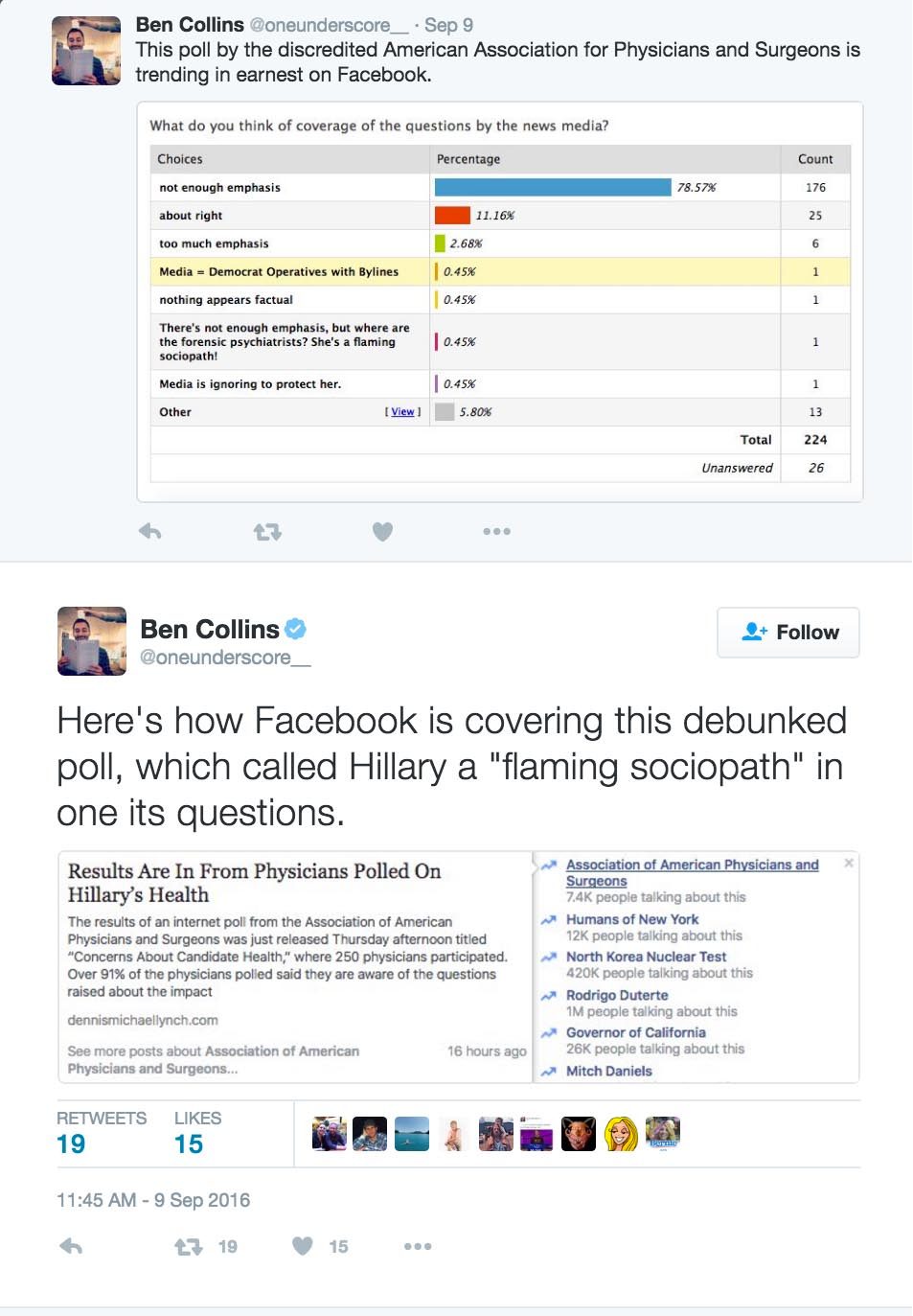

On September 9, for example, Facebook promoted in its Trending News section a poll of “doctors” which found Hillary Clinton was “a flaming psychopath.”

This claim isn’t true, and the group that posted it was behind numerous internet conspiracy theories that had already been debunked.

Shortly before that, Facebook decided to end human oversight over the algorithms after a controversy over claims that conservative articles were not getting enough visibility on the news feed.

For the social media giant, sometimes it’s damned if you do, damned if you don’t.

“If Facebook were to start limiting what people could say on Facebook, it very easily falls into freedom of speech issues, which are already an issue that they deal with from the other side,” Sy reminded the audience, citing an iconic photo recently censored by Facebook.

“Napalm Girl,” a 1972 photo of a 9-year-old girl whose clothes were burned away by a napalm attack in Vietnam, won the Pulitzer Prize in 1973. (Facebook changed that policy shortly after.)

“This is just the tip of the iceberg as people across the world understand the power of these platforms and how important they are in determining what people know,” Herbst told international journalists in New Delhi.

Greater impact in emerging democracies

You could argue that Facebook’s impact is even greater in developing economies in Southeast Asia. In countries like Indonesia and Myanmar, Facebook is synonymous with the internet.

In the Philippines, where the median age of its 100 million people is 23 years old, more than 96% of Filipinos on the internet are on Facebook.

“People are more confused than enlightened these days, and I can only blame technology,” said Vergel Santos, chairman of the board of the Center for Media Freedom and Responsibility at #HackSociety’s panel on Technology and the Public Debate.

The elections also seemed to unleash anger and hatred, made easier on social media. Real issues of marginalization, a wide gap between the rich and the poor, along with many other perceived injustices are ignited by a leader who often threatens violence.

“Everybody’s worry on social media is all of the bullying and all of the bad behavior that comes with it,” UP Communications Professor Clarissa David told me in an interview last May, specifically mentioning sexist and/or misogynistic attacks.

David warned of a possible spiral of silence that is changing the quality of our public discourse: “People who are receiving threats – they’re just going to stop talking … the more silent they are, the louder the other side gets, or seems to get and the more that happens, then you get into this spiral of people deciding to stop engaging.”

This is partly why Rappler began #NoPlaceForHate – to help prevent the erosion of public discourse and bridge online echo chambers.

Futurists warn of even greater disruption ahead: exponential change to our societies because of technology.

This, they add, is when humanity becomes even more important. (READ: #ThinkPH: Man must learn to dance with machines)

“Technology has no morals. Technology has no values,” added Santos. “Technology is too neutral for us to be relying on it alone.”

Tech platform or media?

Facebook CEO Mark Zuckerberg had clear views about Facebook’s role when asked last August: “We’re a technology company. We’re not a media company.”

Still, given the central role Facebook is playing today in disseminating news, and the advent of political advocacy pages masquerading as credible sources of information on it, the social media giant may have to evolve to take on the standards and ethics that defined the fourth estate. (READ: To curb hoaxes, Facebook must accept it’s a media company)

It seems clear the world has new gatekeepers.

“So I think it is incumbent upon these companies to begin to think of themselves as news companies,” Herbst told journalists. “If they don’t take this position now, they will be forced to do so in the future.”

“Facebook is a system,” countered technologist Stephanie Sy, “and systems express moral and ethical behavior through what they incentivize and what behavior they disincentivize … those of us in tech, we also have a responsibility to be part of this discussion and to build systems that incentivize ethics.” – Rappler.com
