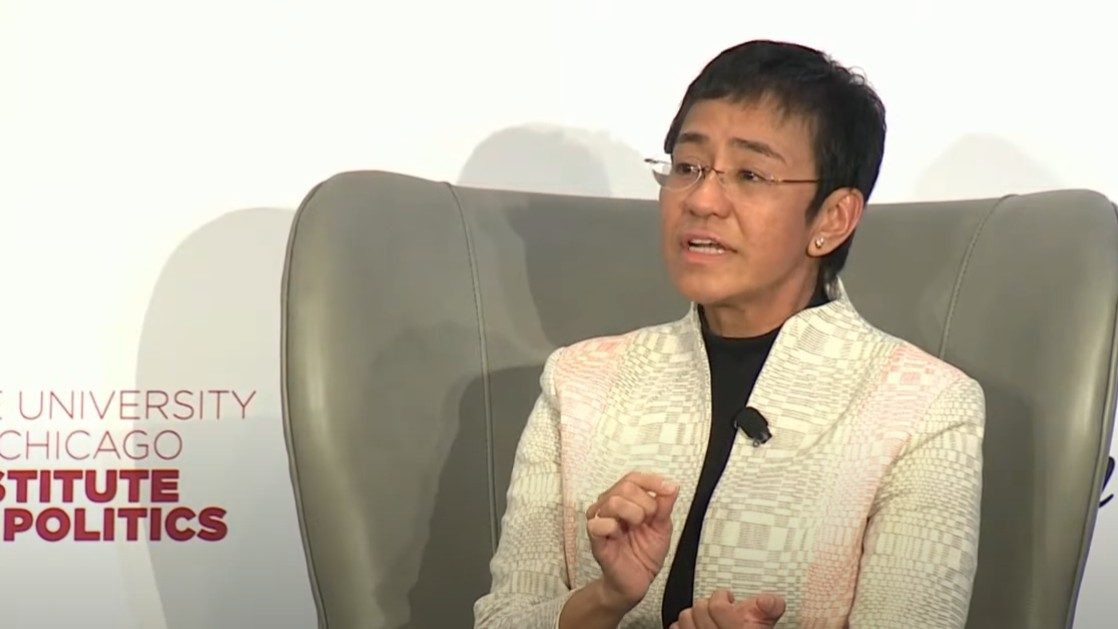

Rappler CEO and Nobel Laureate Maria Ressa was a keynote speaker at American magazine The Atlantic’s Disinformation and the Erosion of Democracy event, together with other esteemed guests such as former US President Barack Obama, former United States Director of the Cybersecurity and Infrastructure Security Agency Chris Krebs, and US Senator Amy Klobuchar.

The three-day event from April 6 to 8, US time, explored “the roots and scope of the problem [of disinformation], the next-generation threats posed by new technological advances, and the tools and policies required to neutralize them.”

Ressa’s session was held on the first day of the event, April 6. In her hour-long session, which also included a Q&A with The Atlantic executive editor Adrienne LaFrance, Ressa discussed the information operations in the Philippines that began in 2016, which used a network of fake accounts to favor President Rodrigo Duterte’s agenda and seeded narratives to take aim at critical media like Rappler. Later, similar disinformation tactics would be used by leading presidential candidate Ferdinand Marcos Jr. in his attempt to return to Malacañang. If he succeeds, disinformation will have had something to do with it, Ressa said.

Ressa also met Barack Obama on the sidelines.

The session can be watched on YouTube below. Ressa’s session starts at the 13:48 mark.

Here are key quotes from Ressa during the event:

- “This is my 36th year as a journalist. I’ve spent that entire time learning how to tell stories that will make you care. But when we’re up against lies, we just can’t win because facts are really boring – hard to capture your amygdala the way lies do.”

An MIT study in 2018 found that lies laced with hate and anger spread faster than facts. Ressa later added in the Q&A that biology is part of the reason. People experience a level of emotional arousal when they see content on platforms. That arousal is often very high when a piece of content stirs anger, which leads to actions such as sharing the content. Disinformation architects know this; they know how to manipulate emotions, and they know just which ones to trigger to make a piece of content, a lie, spread fast.

- “We only debate content moderation. That’s like, you’re looking at a polluted river and you’re only looking at the test tube of water versus where the pollutant is coming from. I call it a virus of lies.”

Ressa explained that to begin to solve the problem of disinformation on the tech platforms’ end, we can’t just look at content moderation, which she compared to merely looking at a test tube of water from a polluted river. Instead, we also have to look further upstream where the “algorithmic amplification” is happening – the operating system that determines which piece of content spreads; and the microtargeting, which is “opinion in code” similar to an editor making a content decision exponentially multiplied millions and millions of times.

And even further upstream, Ressa explained, is where “our personal data has been pulled together by machine learning for a model of you that knows you better than you know.” We have to take back our data, Ressa said, from the business model that is surveillance capitalism – personal data sold to the highest bidder.

- “I believe we need to get legislation in place, that our data should be ours, that Section 230 should be killed because in the end, these platforms, these tech platforms, are not like the telephone. They decide. They weigh in on what you get. And the primary driver is money. It’s surveillance capitalism. So the microtargeting it’s like going to a psychologist and telling your psychologist your deepest, darkest secrets, and then that psychologist goes around and says ‘Yo, who wants this? Who’s the highest bidder?’”

Ressa praised two pieces of European Union legislation, the Digital Markets Act and the Digital Services Act, which will put more pressure on tech companies to police their content and subject them to stricter antitrust regulation. The US, as LaFrance also acknowledged, is generally believed to be behind the EU in big tech regulation. One of the more contentious pieces of US legislation right now is Section 230, which, put simply, makes tech platforms not liable for what users post on their platforms.

In the quote, Ressa also illustrated how the current relationship between users and tech platforms violates personal freedoms such as the right to privacy, especially in a setup where private data is used to reap profits for the tech platform.

- “How do you look at the impact of disinformation? When you pound it, you can change history in front of your eyes. That’s what happened to [the Philippines]. And if Ferdinand Marcos Jr. wins [in the 2022 presidential elections], partly, that will be because of [disinformation]. So, this is emblematic of the battle for facts. That’s why the battle for facts is critically important.”

Social media has been a key pillar in the Marcoses’ playbook of rewriting history, which has included networked propaganda, false narratives, content seeded on YouTube, attempts to create a network of accounts on Twitter, and a Chinese fake account network with a focus on Senator Imee Marcos, to name a few.

Experts have also stated that Marcos Jr. has been a top beneficiary of disinformation through the years.

- “No president has really ever liked me completely in the Philippines. That’s okay, I don’t mind. So long as they respect you or they think you’re fair – that’s all we’re after. But today is so much different. It’s not about you [the media company], they’ll create their own [outlets].”

“Like Alex Jones in the States, we now have a group in the Philippines. This is the preacher of President Duterte. His name is Apollo Quiboloy. He’s wanted by the FBI for sex trafficking. But he has one of the largest Facebook pages for disinformation, and he just received a television franchise from the government in a midnight deal. So what they do is instead of actually dealing with a journalist, they just create their own,” Ressa said.

In this kind of information ecosystem where new channels can be created in a snap, people’s preference for who to listen to or watch will not be based on who’s factual. It’ll be identity-based; it’ll depend on who you like more for one reason or another, and not because they are a journalist striving to get the facts out there.

“How do you protect the facts?” Ressa asked. – Rappler.com
